Mar 24, 2026 | 10 minutes

Build reliable agents with Make MCP Toolboxes

How MCP Toolboxes give you control over what Claude, Cursor, and ChatGPT can do.

AI agents need more than access – they need precision

Claude and Cursor are quickly becoming go-to operating environments for developers, product managers, and technical leaders. And as the Model Context Protocol (MCP) gains traction, the expectation is clear: AI should do more than think. It should act – creating tickets, updating CRMs, triggering workflows, and modifying databases on your behalf.

But giving AI raw access to your systems isn’t orchestration. It’s delegation without control.

Native MCP connections expose entire API surfaces, force the LLM to infer multi-step workflows, and inflate token usage in the process. Consider giving an LLM raw access to a platform like Confluence. It sounds productive – until the agent accidentally deletes a critical company page. And even without catastrophic errors, dumping a massive list of tools into the LLM's context window forces it to waste tokens just reading specs and figuring out which tool to call. That increases cost, slows execution, and raises the risk of the LLM selecting the wrong action entirely. Governance blind spots, unpredictable execution, and inflated spend – none of which belong in production environments.

Enterprise-grade AI requires purpose-built tools, deterministic execution, strict scoping, observability, and managed governance. That’s exactly what Make MCP Toolboxes deliver.

Introducing MCP Toolboxes: managed AI access at scale

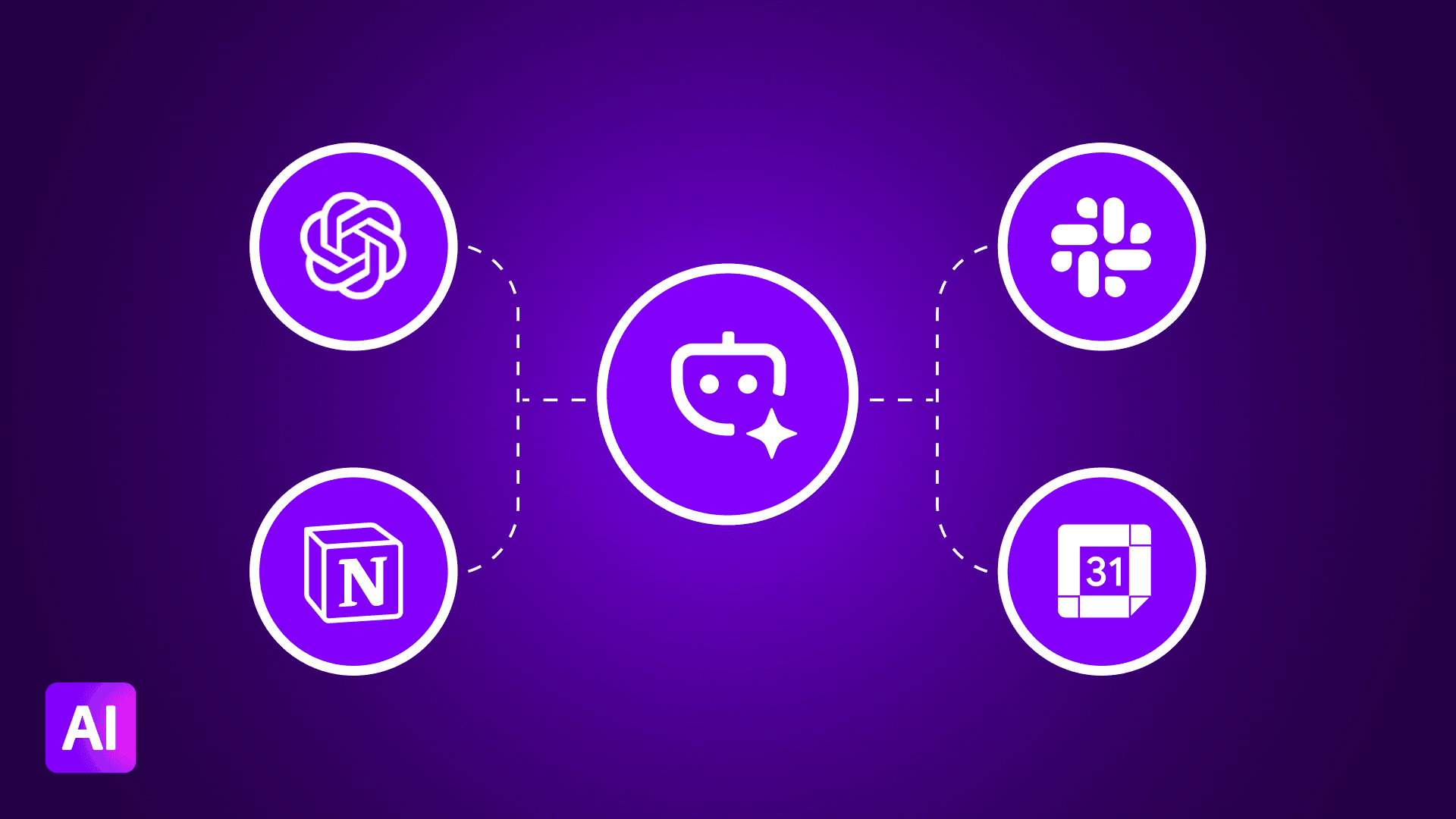

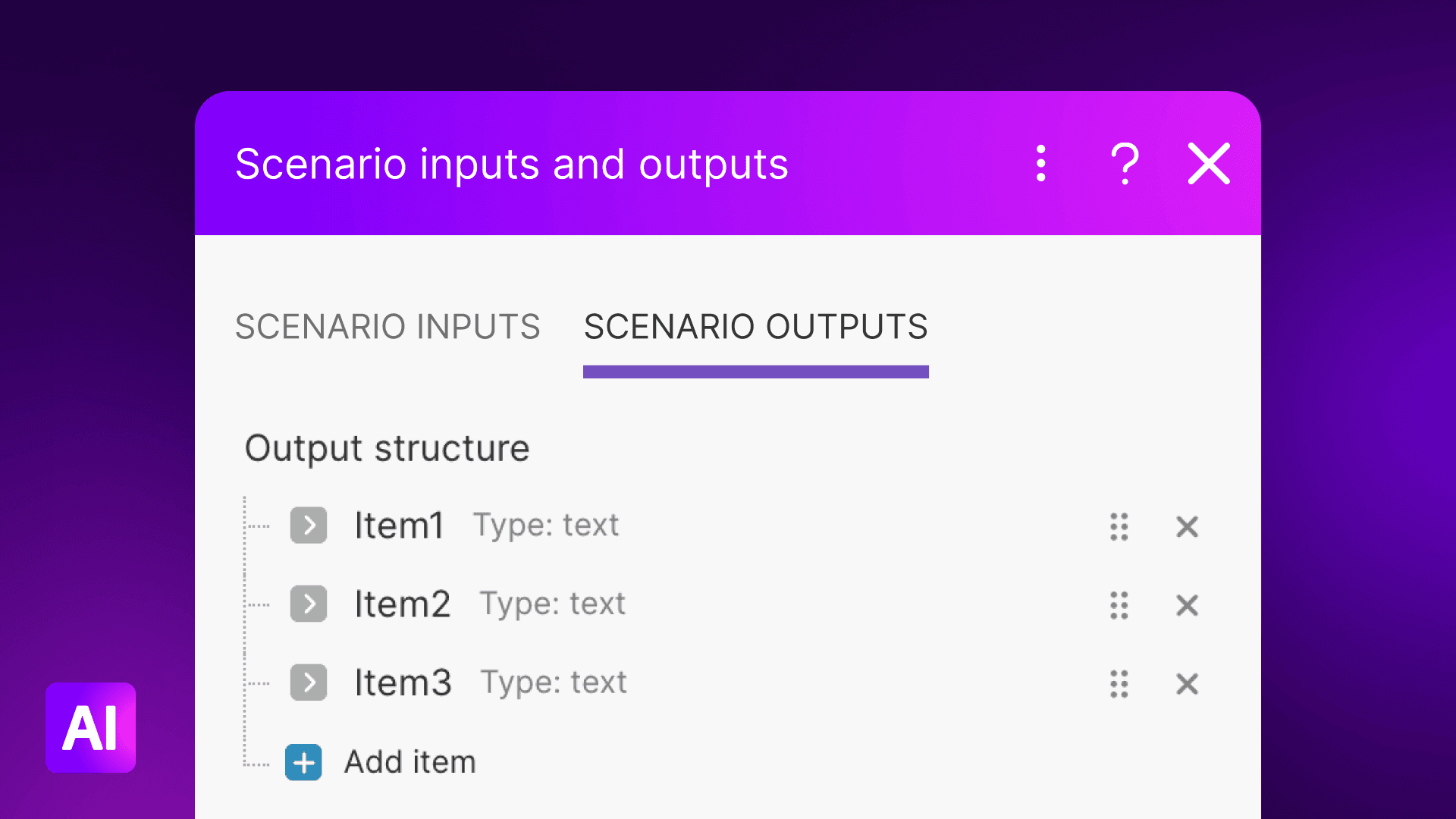

MCP Toolboxes are dedicated MCP servers that you create at the team level in Make. Rather than exposing your entire tech stack to an AI client, you select a specific subset of scenarios and publish them as callable tools.

Here’s what you get:

Tool management in Make: Add, configure, label, and delete tools from a centralized interface. Assign descriptions and designate each tool as read-only or read-and-write.

Token-based authorization: Generate multiple keys per toolbox. Each key limits access to only the tools in that specific toolbox – no shared credentials, no all-or-nothing exposure.

Unique URL per toolbox: Every toolbox gets its own endpoint, so you can power different AI agents or clients with entirely different toolsets.

Monitoring and visibility: Track tool usage and see which tools were called, with which parameters, and what actions they took.
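Because a toolbox is an MCP server, clients discover its tools through the protocol’s `tools/list` method. The MCP specification lets a server mark a tool with a `readOnlyHint` annotation. The minimal sketch below assumes Make’s read-only / read-and-write setting surfaces that way (an assumption, not confirmed here), and the tool names and schemas are hypothetical. It shows how a client could pick out the read-only tools from a `tools/list` result.

```python
# A hypothetical tools/list result, shaped per the MCP specification.
# Tool names, descriptions, and schemas are illustrative, not taken from Make.
TOOLS_LIST_RESULT = {
    "tools": [
        {
            "name": "get_customer",
            "description": "Look up a customer record by email.",
            "inputSchema": {
                "type": "object",
                "properties": {"email": {"type": "string"}},
                "required": ["email"],
            },
            # Assumption: the toolbox's read-only flag maps to this MCP hint.
            "annotations": {"readOnlyHint": True},
        },
        {
            "name": "onboard_customer",
            "description": "Create contact, company, and deal for a new customer.",
            "inputSchema": {
                "type": "object",
                "properties": {
                    "email": {"type": "string"},
                    "company": {"type": "string"},
                },
                "required": ["email", "company"],
            },
            "annotations": {"readOnlyHint": False},
        },
    ]
}


def read_only_tools(result: dict) -> list[str]:
    """Return the names of tools the server marks as read-only.

    A missing annotation counts as read-and-write, which is the MCP default.
    """
    return [
        tool["name"]
        for tool in result.get("tools", [])
        if tool.get("annotations", {}).get("readOnlyHint", False)
    ]


print(read_only_tools(TOOLS_LIST_RESULT))
```

Keep in mind that MCP annotations are hints for the client. The actual enforcement is which scenarios you place in the toolbox and which key the client holds.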

The governance layer leaders need

Without MCP Toolboxes, teams often resort to workarounds – shared internal tokens, dummy accounts, overly broad API access – just to get their AI agents connected. The result is a patchwork of ungoverned access that’s difficult to audit and risky to maintain.

MCP Toolboxes change that equation:

You define exactly which tools are available to each agent

You limit parameters and scope at the scenario level

You audit every invocation through centralized monitoring

You eliminate cross-client data exposure with unique URLs and scoped tokens

For security-conscious organizations, this transforms AI from a potential liability into a governed operational system – one where every action is scoped, logged, and traceable.

Practical use cases

Deterministic orchestration with Claude

Instead of Claude reasoning through CRM logic step by step – searching, validating, creating, associating – you expose a single tool: "Onboard Customer." Behind the scenes, Make executes the full sequence: validation, contact creation, company association, deal setup, and notification triggers. Claude receives a clean confirmation response.

The benefits are tangible:

Lower token consumption – because the LLM isn't ingesting inputs and outputs from every intermediate step

Fewer hallucination risks – because the business logic is defined in Make, not inferred by the LLM

Predictable execution – because Make runs deterministic scenarios, not probabilistic guesses

A cleaner audit trail – because every scenario run is logged and visible in Make

Make absorbs orchestration complexity. Claude stays focused on reasoning.
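To make the difference concrete, here is a minimal sketch of the MCP `tools/call` messages (JSON-RPC 2.0) a client would send in each case. The tool names, including `onboard_customer`, are hypothetical placeholders for the "Onboard Customer" scenario and a generic CRM server; only the message shape comes from the MCP specification.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC request ids must be unique within a session


def tools_call(name: str, arguments: dict) -> dict:
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Toolbox approach: one call to a single hypothetical tool. The Make scenario
# behind it performs validation, creation, association, and notifications.
onboard = tools_call(
    "onboard_customer",
    {"email": "ada@example.com", "company": "Example Ltd"},
)

# Raw-connection approach (hypothetical CRM tool names): the model must plan
# each step and copy ids ("?") out of earlier responses it has to read.
raw_steps = [
    tools_call("search_contacts", {"email": "ada@example.com"}),
    tools_call("create_contact", {"email": "ada@example.com"}),
    tools_call("create_company", {"name": "Example Ltd"}),
    tools_call("associate_contact_company", {"contact_id": "?", "company_id": "?"}),
    tools_call("create_deal", {"company_id": "?"}),
]

print(json.dumps(onboard, indent=2))
print(f"toolbox: 1 call, raw connection: {len(raw_steps)} calls")
```

Every extra round trip in the raw version also puts its response into the model’s context, which is where the token savings come from.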

Chain complex processes into a single tool

Say you want an LLM to research a topic on LinkedIn, compile the data, format a brief, and generate a new Google Doc. With a raw MCP connection, the AI has to reason through and execute every individual step – each one a chance for it to lose context or make a wrong call.

With an MCP Toolbox, you chain these actions into a single background process. You expose one tool to the LLM. Behind the scenes, Make deterministically handles the multi-step workflow – from gathering the data to creating the document – and returns the final Google Doc URL directly to your chat interface.
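However many steps the scenario runs, the client sees only the final tool result. Per the MCP specification, a `tools/call` result carries a `content` array (for example, text items) and an optional `isError` flag. The sketch below assumes the scenario returns the document URL as a single text item; the sample result and URL are hypothetical.

```python
def tool_result_text(result: dict) -> str:
    """Join the text items of an MCP tools/call result.

    Raises RuntimeError if the server flagged the call as failed.
    """
    texts = [
        item["text"]
        for item in result.get("content", [])
        if item.get("type") == "text"
    ]
    if result.get("isError"):
        raise RuntimeError("tool call failed: " + " ".join(texts))
    return "\n".join(texts)


# Hypothetical result returned by a "research and create brief" scenario.
sample_result = {
    "content": [
        {"type": "text", "text": "https://docs.google.com/document/d/EXAMPLE_ID/edit"}
    ],
    "isError": False,
}

print(tool_result_text(sample_result))
```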

More ways teams are using MCP Toolboxes

Bypass native connector limits. Native integrations in LLM clients like Claude often restrict you to a single account per app. You can connect your work Slack, but not your personal or community accounts at the same time. With an MCP Toolbox, you build a centralized tool that bridges multiple accounts – querying data across five different Slack communities or searching both personal and work inboxes in a single prompt, with the toolbox routing the action to the right account.
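One way to present such a bridging tool to the model is a single input schema with an `account` parameter restricted to the accounts you connected in Make; the routing itself happens inside the scenario. The minimal sketch below uses a hypothetical `search_slack` schema and a deliberately simplified argument check (required keys and enum values only, not full JSON Schema validation).

```python
# Hypothetical input schema for a single "search_slack" tool spanning several
# workspaces. The enum limits the model to accounts you actually connected.
SEARCH_SLACK_SCHEMA = {
    "type": "object",
    "properties": {
        "query": {"type": "string"},
        "account": {
            "type": "string",
            "enum": ["work", "personal", "community-a"],
        },
    },
    "required": ["query", "account"],
}


def check_arguments(schema: dict, arguments: dict) -> list[str]:
    """Return a list of problems with the arguments (empty if they look valid).

    Only checks required keys, unknown keys, and enum membership.
    """
    problems = []
    for key in schema.get("required", []):
        if key not in arguments:
            problems.append(f"missing '{key}'")
    for key, value in arguments.items():
        spec = schema["properties"].get(key)
        if spec is None:
            problems.append(f"unexpected '{key}'")
        elif "enum" in spec and value not in spec["enum"]:
            problems.append(f"'{key}' must be one of {spec['enum']}")
    return problems


print(check_arguments(SEARCH_SLACK_SCHEMA, {"query": "release notes", "account": "work"}))
print(check_arguments(SEARCH_SLACK_SCHEMA, {"query": "payroll", "account": "finance"}))
```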

Turn your LLM into a live testing sandbox. Testing and optimizing scenarios typically means tedious cycles of manually triggering webhooks and checking execution logs. With an MCP Toolbox, advanced builders can turn Claude into a live sandbox for their Make scenarios. Expose a scenario as a tool and rapidly A/B test by passing different variables – swapping AI models, testing text inputs, adjusting parameters – directly through the chat interface. Run a scenario a hundred times without ever leaving the conversation.
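Written out explicitly, an A/B run is a grid of argument sets sent to the same tool. The minimal sketch below builds that grid for a hypothetical `summarize_ticket` tool with hypothetical `model` and `instructions` parameters; in practice you would describe the variations in chat and let the client issue the calls.

```python
from itertools import product

# Hypothetical parameters exposed by a scenario published as a tool.
MODELS = ["model-a", "model-b"]
INSTRUCTIONS = ["Summarize in one line.", "Summarize as three bullets."]


def build_variants(models: list[str], instructions: list[str]) -> list[dict]:
    """Return one arguments dict per combination of model and instructions."""
    return [
        {"model": model, "instructions": text}
        for model, text in product(models, instructions)
    ]


variants = build_variants(MODELS, INSTRUCTIONS)

# Wrap each variant in an MCP tools/call request for the same tool.
requests = [
    {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": "summarize_ticket", "arguments": args},
    }
    for request_id, args in enumerate(variants, start=1)
]

for req in requests:
    print(req["id"], req["params"]["arguments"])
```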

How to create your first MCP Toolbox

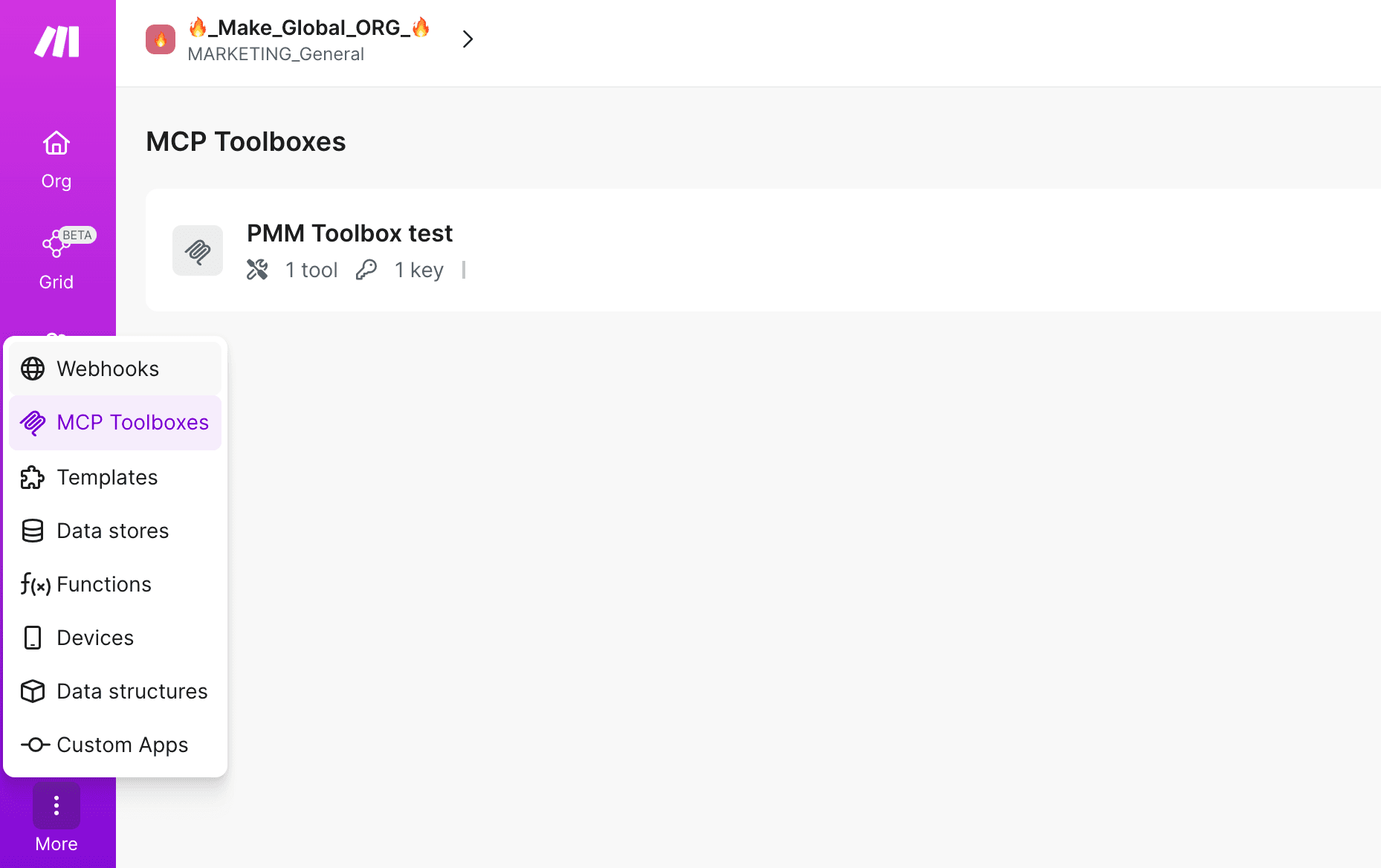

In Make, click MCP Toolboxes in the left sidebar, then Create toolbox at the top.

Name your toolbox, then select the tools you want to expose in the Tools field. Only active scenarios with on-demand scheduling appear in the tool list.

Click Create. In the Create key dialog, copy and securely store your key.

Click Close, then copy the URL from the MCP Server URL field.

Use the URL and key to connect from Claude, Cursor, ChatGPT, or any MCP-compatible client.
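Most clients only need the URL and key pasted into their settings. If you want a quick smoke test outside a client, the sketch below prepares an MCP `initialize` request with Python’s standard library. It assumes the toolbox speaks MCP’s Streamable HTTP transport and accepts the key as a Bearer token in the `Authorization` header; both are assumptions, so check the MCP Toolboxes documentation for the exact scheme. The URL and key values are placeholders.

```python
import json
import urllib.request

# Placeholders: use the value from the MCP Server URL field and the key from
# the Create key dialog. Never commit a real key to source control.
MCP_SERVER_URL = "https://example.invalid/mcp"  # hypothetical
TOOLBOX_KEY = "YOUR_TOOLBOX_KEY"                # hypothetical

initialize = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "initialize",
    "params": {
        "protocolVersion": "2025-03-26",
        "capabilities": {},
        "clientInfo": {"name": "toolbox-smoke-test", "version": "0.1.0"},
    },
}

request = urllib.request.Request(
    MCP_SERVER_URL,
    data=json.dumps(initialize).encode("utf-8"),
    headers={
        "Content-Type": "application/json",
        # Streamable HTTP servers may reply with plain JSON or an SSE stream.
        "Accept": "application/json, text/event-stream",
        # Assumption: the toolbox key is sent as a Bearer token.
        "Authorization": f"Bearer {TOOLBOX_KEY}",
    },
    method="POST",
)

# Sending is commented out so the sketch runs offline:
# with urllib.request.urlopen(request) as response:
#     print(response.status, response.read().decode("utf-8"))
print(request.get_method(), request.full_url)
```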

For detailed setup instructions, including connecting to specific clients like Claude Desktop, check out our MCP Toolboxes documentation and the Make Developer Hub.

Raw MCP vs. Make MCP Toolboxes

MCP is a protocol. It defines how AI clients communicate with external systems. That’s valuable – but on its own, it doesn’t address the operational challenges that come with deploying AI in a business context.

When you connect an AI client directly to a raw MCP server, the AI has to interpret the full MCP surface, infer which tools to call and in what order, and hope it gets the sequence right. With Make MCP Toolboxes, you define the workflows. The AI simply triggers them.

Here’s how that plays out across different contexts:

Compared to direct app MCP servers: With a direct connection, the AI guesses workflows. With Make, you define them. Your scenarios encode business logic, error handling, and multi-step sequences that no LLM should be inventing on the fly.

Compared to agent-first platforms: Agent-first tools focus on the reasoning layer. Make focuses on tool reliability and governance – making sure the actions your agents take are predictable, auditable, and correct.

Compared to code-only frameworks: Custom code is flexible but hard to audit at scale. Make’s visual Scenario Builder provides built-in logs, error handling, and operational controls that keep pace with growing complexity.

Why teams trust Make for AI orchestration

Make doesn’t compete with Claude, Cursor, or ChatGPT. Make empowers them. Here’s what that looks like at an organizational level:

Deterministic execution: Scenarios run consistently. No guessing, no variation, no hallucinated steps.

Scoped access control: Each toolbox contains only the tools you choose to expose. Different agents, different toolsets, different keys.

Reduced hallucination risk: Business logic lives in Make, not in the LLM’s prompt context. The AI triggers; Make delivers.

Observability and logging: Every tool call is tracked. Every scenario run is visible. You know exactly what your agents are doing.

Secure credential handling: Your AI clients never touch underlying API credentials. Make manages connections to your apps and services securely.

Controlled combination of reasoning and execution: The LLM thinks. Make acts. Each layer does what it does best.

Define the logic. Let the AI trigger it.

If you’re connecting Claude or Cursor directly to raw MCP servers, you’re trusting an LLM to invent your business logic. That might work for prototyping, but it won’t hold up in production.

MCP Toolboxes give you a better path: define what your AI agents can do in Make’s visual Scenario Builder, scope their access with dedicated toolboxes, and let Make handle the execution. You get reliability, governance, and observability – without sacrificing flexibility.

Get started

Create your first MCP Toolbox at the team level

Read the technical documentation on the Make Developer Hub