Apr 20, 2026 | 8 minutes

RPA vs. agentic AI: What's the difference and which should you use?

Your RPA bots break when interfaces change. Learn when to use RPA vs agentic AI, where agentic AI earns its place, and how to build a hybrid automation program with Make that handles both.

A vendor updates their portal layout over the weekend, and by Monday morning, your inbox is full of failed operation alerts. The bot that has reliably pulled invoices for the last two years can no longer find the download button because the HTML element changed.

Nothing moves until someone submits a ticket, a developer investigates, and the bot is patched. It is a pattern Gartner has documented across finance departments, identifying implementation failure as one of the most consistent challenges in scaling RPA programs.

Treating RPA and agentic AI as competitors misses the real question. It is not about replacing what you have built.

It is about knowing which parts of your process need to follow fixed rules, and which parts break because of them.

What are RPA and agentic AI?

Robotic process automation (RPA) uses software bots that mimic human interactions with application interfaces, and many RPA tools can also call APIs. It executes structured, rule-based processes. If the input is predictable and the environment is stable, RPA moves data exactly as instructed.

It is built for stability, not flexibility. It does not make judgments. It follows instructions exactly.

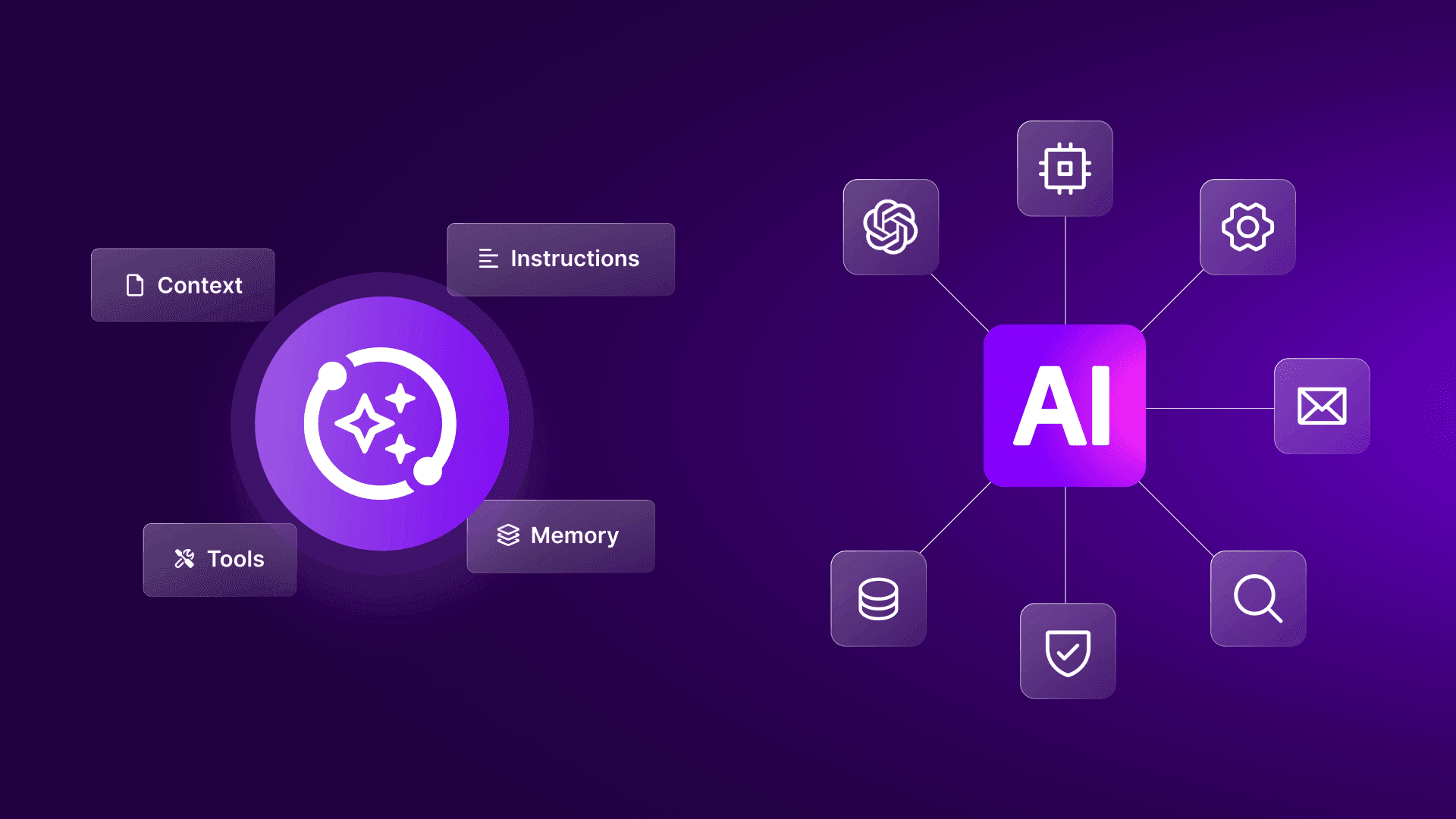

Agentic AI refers to processes where an artificial intelligence model takes actions based on reasoning about content or context, rather than just format or position.

These systems handle variability in inputs, make conditional decisions based on meaning, and route exceptions without requiring a human to handle every case.

In practice, agentic AI rarely exists as a standalone product. It usually involves AI models embedded within an orchestration layer.

Both approaches are distinct from simpler trigger-action automation: tools such as basic webhooks or linear connectors that execute a fixed sequence when a condition is met.

Those tools move data between systems but make no decisions. RPA mimics UI interactions across multistep processes. Agentic AI acts on the meaning of content, not just its presence or format.

RPA solves for consistency

RPA works best when your source system, target system, and business rules remain stable. That is why teams often use it for finance operations, data transfer between older systems, and UI-based tasks where APIs are unavailable.

In those cases, traditional RPA creates value because it reduces human handling across high-volume, repetitive operations. The logic is explicit, testable, and usually straightforward to audit.

The weakness appears when the environment shifts. A renamed field, a revised login sequence, or an extra confirmation screen can stop the process immediately. That failure mode is visible, but it is still expensive.
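To make that failure mode concrete, here is a minimal Python sketch of rule-based extraction. It assumes the source system hands over each record as a dictionary with fixed field names (`invoice_id` and `amount` are hypothetical, as are the function and exception names). The logic copies data exactly while the structure holds and halts the moment a field is renamed.

```python
# Hypothetical field names standing in for a recorded selector or fixed mapping.
REQUIRED_FIELDS = ("invoice_id", "amount")


class StructureChanged(Exception):
    """Raised when a source record no longer matches the expected structure."""


def extract_invoice(record: dict) -> dict:
    """Copy the required fields verbatim, or stop if any are missing."""
    missing = [name for name in REQUIRED_FIELDS if name not in record]
    if missing:
        # Fail loudly instead of guessing: the process halts until someone patches it.
        raise StructureChanged(f"missing fields: {missing}")
    return {name: record[name] for name in REQUIRED_FIELDS}


if __name__ == "__main__":
    print(extract_invoice({"invoice_id": "INV-1", "amount": 120.0}))
    try:
        # The vendor renamed "amount" to "total" over the weekend.
        extract_invoice({"invoice_id": "INV-2", "total": 80.0})
    except StructureChanged as err:
        print("halted:", err)
```

The rename produces an explicit error rather than a wrong value, which is exactly the "visible but expensive" behavior described above.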

Agentic AI solves for variability

Agentic AI fits tasks where the important signal lives in meaning rather than structure. The system needs to interpret language, compare context, or decide between multiple valid paths.

That makes it useful for document review, exception classification, email triage, and issue routing. In those scenarios, agentic AI handles ambiguity that rule-based logic cannot cover without exploding into brittle conditional trees.

The tradeoff is different. Instead of breaking loudly when a selector changes, the model can produce output that looks valid but misses the business context. That demands tighter validation, stronger fallback rules, and better observability.

Why the comparison often goes wrong

Most comparisons reduce this to speed versus intelligence, which misses the point. The useful question is not whether one technology is more advanced. It is whether a given step depends on a fixed rule, or on what something means.

Once you frame the problem that way, the architecture usually becomes clear.

When to use RPA and when to use agentic AI

The choice between RPA and agentic AI comes down to one thing: what does your process actually depend on?

If it depends on structure, RPA works. If it depends on meaning, you need agentic AI.

The boundary in practice: Invoice processing

Consider a vendor invoice process. Extracting data from a standardized template and pushing it into an ERP system is an ideal use case for RPA. The inputs are known, the schema is fixed, and the output is deterministic.

However, the process breaks when a new vendor submits a non-standard invoice format, or when there is a discrepancy between the line items and the original purchase order.

When RPA handles exceptions: The bot fails to locate the expected fields. It drops the item into an exception queue for manual review.

When agentic AI handles exceptions: The model reads the document, understands the context of the discrepancy, and can make a conditional decision, such as returning the invoice to the vendor with a specific correction request.
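The split between the two paths can be sketched in Python. This assumes invoices and purchase orders have already been extracted into simple line items; `LineItem`, `route_invoice`, the price tolerance, and the route names are all hypothetical. Deterministic matching decides whether a discrepancy exists, and only invoices that fail the match would be handed to an AI step, for example to draft a correction request for the vendor.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    sku: str
    quantity: int
    unit_price: float


def find_discrepancies(invoice: list[LineItem], purchase_order: list[LineItem],
                       price_tolerance: float = 0.01) -> list[str]:
    """Compare each invoice line with the PO and describe any mismatch."""
    po_by_sku = {item.sku: item for item in purchase_order}
    issues = []
    for line in invoice:
        po_line = po_by_sku.get(line.sku)
        if po_line is None:
            issues.append(f"{line.sku}: not on purchase order")
        elif line.quantity != po_line.quantity:
            issues.append(f"{line.sku}: quantity {line.quantity} vs PO {po_line.quantity}")
        elif abs(line.unit_price - po_line.unit_price) > price_tolerance:
            issues.append(f"{line.sku}: price {line.unit_price} vs PO {po_line.unit_price}")
    # Sketch only: PO lines that never appear on the invoice are not checked here.
    return issues


def route_invoice(invoice: list[LineItem],
                  purchase_order: list[LineItem]) -> tuple[str, list[str]]:
    """Clean matches go straight to the ERP; anything else needs interpretation."""
    issues = find_discrepancies(invoice, purchase_order)
    if not issues:
        return "post_to_erp", issues
    return "interpret_exception", issues
```

Passing the list of issues along with the route gives the AI step (or a reviewer) concrete context instead of a bare failure flag.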

This same principle applies to customer support triage or content review pipelines. Stable inputs require structured rules; ambiguous inputs require interpretive logic.

Customer operations: clear intent vs messy language

A customer message that contains a known order number and a standard cancellation request is not difficult to automate. RPA or API-based logic can validate the fields, locate the order, and send the next action to the right system.

A message that says, "The invoice looks wrong, and the service was down again last Thursday," is different. That requires interpretation. The system has to identify whether the customer is disputing billing, reporting an incident, or asking for escalation.

This is where the difference between RPA and AI becomes operational, not theoretical. One follows fields and rules. The other interprets intent and context.
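A minimal Python sketch of that boundary, assuming a hypothetical order-number format such as `ORD-12345`: rules handle the clear-intent case, and everything else is handed to an interpretation step. The `triage` function and route names are illustrative, not part of any product.

```python
import re

# Hypothetical order-number format; adjust the pattern to your own system.
ORDER_ID = re.compile(r"\bORD-\d{5}\b")


def triage(message: str) -> dict:
    """Route clear cancellation requests by rule; send the rest to interpretation."""
    order_ids = ORDER_ID.findall(message)
    if len(order_ids) == 1 and "cancel" in message.lower():
        # Known field plus a standard request: no judgment required.
        return {"route": "cancel_order", "order_id": order_ids[0]}
    # Mixed or vague messages need an AI step to infer intent
    # (billing dispute, incident report, escalation request, ...).
    return {"route": "interpret_intent", "order_id": order_ids[0] if order_ids else None}
```

Keyword matching like this is deliberately naive: a message such as "don't cancel ORD-12345" would be misrouted, which is one reason the rule-based path should stay narrow and anything ambiguous should go to interpretation.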

Internal operations: system glue vs decision layers

Many enterprise teams still rely on older systems that expose inconsistent APIs, or no API at all. RPA remains useful in these environments because it can bridge gaps without waiting for a replacement project.

At the same time, decision-heavy steps rarely stay stable for long. Approval logic changes. Compliance language shifts. Categories multiply. A rigid rules engine becomes expensive to maintain because every edge case forces another branch.

The simplest decision rule is this: if your inputs are consistent and the logic does not change, use RPA. If the inputs vary or the decision depends on what something means rather than where it sits, use agentic AI.

If both are true in the same workflow, the design question is not which tool to pick. It is where the handoff between them sits.
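The rule is simple enough to write down. Here is a minimal Python sketch, assuming each step of a workflow has been judged on three yes/no questions; the function names and the "undecided" label are illustrative, not part of any tool. It labels each step and lists the points where consecutive steps switch approach, which are the handoffs that need design attention.

```python
def classify_step(inputs_consistent: bool, logic_stable: bool,
                  depends_on_meaning: bool) -> str:
    """Apply the decision rule to a single step."""
    if not inputs_consistent or depends_on_meaning:
        return "agentic AI"
    if logic_stable:
        return "RPA"
    # Consistent inputs but frequently changing rules: the simple rule does
    # not settle this case, so flag it for a deliberate design decision.
    return "undecided"


def find_handoffs(steps: list[tuple[str, bool, bool, bool]]) -> list[tuple[str, str]]:
    """Return pairs of consecutive steps whose approach differs."""
    labels = [(name, classify_step(c, s, m)) for name, c, s, m in steps]
    return [(a_name, b_name)
            for (a_name, a), (b_name, b) in zip(labels, labels[1:])
            if a != b]


invoice_flow = [
    ("pull invoice", True, True, False),
    ("review discrepancy", False, False, True),
    ("post to ERP", True, True, False),
]
print(find_handoffs(invoice_flow))
```

For the invoice example, both handoffs surround the discrepancy review, which is where validation and fallback logic belong.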

The real costs of each approach

Every automation paradigm carries operational costs. Transitioning from one to the other simply shifts where the maintenance burden sits.

Any serious automation program needs to account for these realities.

The RPA maintenance burden

RPA is inexpensive to run at scale, but it is highly sensitive to environmental changes. UI updates, API deprecations, or slight shifts in data structures break the bots. The cost is measured in developer hours spent patching fragile connections and operational hours spent clearing exception queues.

This creates a predictable ownership pattern. Automation teams spend less time designing new business logic and more time preserving existing behavior. When the estate grows, this patching work starts to crowd out new delivery.

RPA also depends on disciplined change management in adjacent systems. If application owners update a screen without notifying the automation team, the failure can propagate before anyone notices.

The agentic AI iteration cycle

Agentic AI handles unstructured inputs well, but it introduces a different kind of complexity: it requires iteration. AI modules need prompt tuning, and the first version of a prompt usually handles the common case well but produces variable output on edge cases.

Expect two to four refinement cycles before an AI module returns output consistent enough for production. Furthermore, building an audit trail for AI decisions requires deliberate design, whereas RPA logging is often built in.

The operating cost is less about patching selectors and more about improving instructions, validation, and exception handling. That means the work shifts from brittle UI maintenance to scenario design and model governance.

Latency and token cost also matter. If every low-value task routes through a large reasoning model, the architecture becomes expensive without improving outcomes. The model has to earn its place.
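A back-of-the-envelope calculation shows why. The figures below are placeholders (100,000 items a month, 2,000 tokens per item, and a made-up price of 10 currency units per million tokens), not real pricing; substitute your own provider's rates and volumes.

```python
def monthly_model_cost(items: int, tokens_per_item: int, price_per_million: float) -> float:
    """Token spend for one month: items x tokens per item x price per million tokens."""
    return items * tokens_per_item * price_per_million / 1_000_000


ITEMS = 100_000         # placeholder monthly volume
TOKENS = 2_000          # placeholder tokens per item (prompt plus response)
PRICE = 10.0            # made-up price per million tokens, not a real rate
AMBIGUOUS_SHARE = 0.10  # placeholder share of items that genuinely need judgment

# Option A: every item goes through the large model.
everything_to_model = monthly_model_cost(ITEMS, TOKENS, PRICE)
# Option B: deterministic logic handles the rest; only ambiguous items hit the model.
only_ambiguous = monthly_model_cost(round(ITEMS * AMBIGUOUS_SHARE), TOKENS, PRICE)
print(everything_to_model, only_ambiguous)  # 2000.0 200.0
```

Under these assumptions, routing only the ambiguous tenth cuts model spend by 90 percent. Deterministic steps are not free, but their cost does not scale with token volume.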

Hidden cost categories leaders often miss

The visible cost of both approaches is only part of the picture. The harder costs usually surface later:

Testing overhead: Hybrid logic requires more test cases because structure and meaning can fail in different ways.

Audit design: AI-based decisions need explicit logging of prompts, outputs, confidence checks, and fallbacks.

Team boundaries: RPA often sits with automation specialists, while AI decisions pull in operations, compliance, and domain owners.

Failure detection: A broken selector fails loudly. A plausible but wrong summary may not fail at all.
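An audit entry for an AI decision can be as simple as one structured log line per model call. Here is a sketch in Python, assuming logs are written as JSON lines; the class and field names are hypothetical and simply mirror the audit items listed above (prompt, output, confidence, validation result, fallback).

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AIDecisionRecord:
    """One audit entry per AI call; all field names are illustrative."""
    scenario: str            # which workflow made the call
    prompt_version: str      # ties the output to a specific prompt revision
    prompt: str              # the exact text sent to the model
    model: str               # which model answered
    model_output: str        # raw response, stored before any parsing
    confidence: float        # score used for branching (self-reported or computed)
    passed_validation: bool  # did the output match the required structure?
    fallback: Optional[str] = None  # e.g. "human_review" when the output was not used
    timestamp: str = field(default_factory=_utc_now)

    def to_log_line(self) -> str:
        """Serialize as one JSON line, suitable for append-only logs."""
        return json.dumps(asdict(self), sort_keys=True)
```

Storing the raw output and the prompt version together is what lets you explain a decision after the prompt has been tuned again.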

| Approach | Ideal use case | Primary failure mode | Hidden cost |
|---|---|---|---|
| RPA | High-volume, structured data transfer | Fails immediately when the UI or API changes | Constant patching and maintenance |
| Agentic AI | Ambiguous inputs, content-based routing | Silently returns semantically incorrect data | Prompt tuning and exception design |

One cost that almost never shows up in planning is what happens when you move too fast.

Drop an AI step into a workflow without locking down its output format, and the next module cannot read it, yet nothing flags an error. Add an agentic step without a fallback, and records go missing silently.

Try to replace your whole RPA estate at once, and you often end up with the same fragility you started with, just in a shinier wrapper.

None of this means the transition is not worth doing. It means doing it in stages is not just caution; it is the faster path.
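One cheap guard against records going missing silently is a reconciliation check after each AI step: every record that went in must come out either processed or in the fallback path. Here is a minimal Python sketch, assuming each record carries a stable ID; the function name and result keys are hypothetical.

```python
def reconcile(input_ids: list[str], processed_ids: list[str],
              fallback_ids: list[str]) -> dict[str, set[str]]:
    """Compare what entered a step with what left it, by record ID."""
    inputs = set(input_ids)
    processed, fallback = set(processed_ids), set(fallback_ids)
    return {
        # Entered the step but never came out: the silent-loss case.
        "missing": inputs - processed - fallback,
        # Came out on both paths: likely a branching bug.
        "double_handled": processed & fallback,
        # Came out without ever going in: likely a mapping bug.
        "unexpected": (processed | fallback) - inputs,
    }


report = reconcile(["a", "b", "c"], ["a"], ["b"])
if any(report.values()):
    print("reconciliation failed:", report)
```

Running a check like this on every batch turns a silent failure back into a loud one, which is the property RPA had for free.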

Why most teams are moving to hybrid RPA and AI setups

The operational question is not whether to replace RPA with AI, but how to build the layer that connects them. Most teams are already moving toward hybrid architectures.

In a hybrid process, RPA handles the stable, deterministic tasks, while AI models classify unstructured documents, extract variable data, and route exceptions.

What a good hybrid design looks like

A strong hybrid architecture separates responsibilities clearly:

RPA or API modules gather and move structured data.

AI modules interpret text, classify intent, or generate structured output from messy input.

Deterministic logic validates AI output before it reaches a critical system.

Human review captures uncertain cases instead of forcing false confidence.

Fully autonomous agents that run an entire process from start to finish without any human checks do exist in some larger organizations. But getting there takes serious investment in testing and monitoring that most teams are not set up for yet.

The more realistic near-term move is adding agentic steps at the specific points where your current logic breaks down, and keeping structure everywhere else.

That matters because it contains the risk. AI handles the judgment calls without becoming the thing every downstream step depends on to get it right.

Three reasons hybrid automation programs fail

Hybrid designs tend to fail in three places:

First: Teams skip schema enforcement. They ask a model to "understand" an input, but never define the exact structure required by the next module.

Second: They route every exception to AI even when a fixed rule would be more reliable.

Third: They lack visibility across connected scenarios, so local changes create downstream issues.

This is why agentic AI vs traditional RPA should not be framed as a replacement. The real design task is deciding where interpretive logic belongs, and where it does not.

How to use Make to implement this

Managing a mix of RPA and AI steps is hard when you cannot see where one ends and the other begins. Make makes that boundary visible.

When you build AI agents and structured processes in Make, the entire process lives on a visual canvas.

Visual debugging and the handoff

The exact moment a process breaks is visible in Make because every module-level output is inspectable. When a structured module fails or an AI module returns unexpected output, the error is not buried in a server log. It is visible right on the canvas.

This is critical for hybrid processes, where the handoff between the AI model and the deterministic rule is usually the point of failure.

You can drop an AI step in at the exact point where your current logic breaks, without touching the rest of the workflow.

Make connects to more than 3,000 apps, so whatever you have already built stays in place.

Multi-model routing

Different tasks require different models. A document classification step might prioritize speed and cost, while a complex decision-routing step requires a model with advanced reasoning capabilities.

Make allows you to route different tasks within a single scenario to different AI models, ensuring you match the right capability to the right step without building entirely separate processes.

That matters for production design. You can reserve expensive reasoning models for high-ambiguity steps and use lighter models where the task is narrow. The scenario stays coherent, and the tradeoffs remain visible.
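In Make, the model is chosen per module inside the scenario; the Python below is only a conceptual sketch of the routing logic, not Make's configuration format. The model names are hypothetical placeholders, and the `ambiguity` score is assumed to come from an earlier step (for example, a cheap classifier).

```python
# Hypothetical model identifiers; substitute the models your connections expose.
LIGHT_MODEL = "small-fast-model"
REASONING_MODEL = "large-reasoning-model"

MODEL_BY_TASK = {
    "classify_document": LIGHT_MODEL,
    "extract_fields": LIGHT_MODEL,
    "resolve_discrepancy": REASONING_MODEL,
}


def pick_model(task: str, ambiguity: float, escalate_above: float = 0.7) -> str:
    """Default to the cheap model per task; escalate only high-ambiguity inputs."""
    if ambiguity > escalate_above:
        return REASONING_MODEL
    return MODEL_BY_TASK.get(task, LIGHT_MODEL)
```

Keeping the mapping in one place makes the cost and capability tradeoff explicit and easy to review.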

Make Grid for enterprise visibility

When your automation spans multiple connected workflows, it gets hard to know what affects what.

Make Grid lets IT and operations leads see their entire scenario ecosystem in one view.

Make Grid maps which scenarios connect, where data flows between them, and how changes in one process impact others down the line. It serves as the governance layer that helps you manage automation sprawl.

This matters even more in hybrid environments. One scenario may validate invoice data, another may classify exceptions, and a third may write final results into a finance system. Make Grid helps teams understand those relationships before a local change becomes a systemic issue.
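Make Grid presents these relationships visually; the underlying idea is a dependency graph. As a conceptual sketch only (not Make Grid's API or data model), here is how the downstream reach of a change can be computed with a breadth-first traversal. The scenario names follow the three-scenario example above, plus a hypothetical notification scenario.

```python
from collections import deque

# Hypothetical scenario graph: each key feeds data to the scenarios listed.
FEEDS = {
    "validate-invoices": ["classify-exceptions"],
    "classify-exceptions": ["write-to-finance", "notify-reviewers"],
    "write-to-finance": [],
    "notify-reviewers": [],
}


def downstream_of(scenario: str, feeds: dict[str, list[str]]) -> list[str]:
    """List every scenario a change could reach, nearest first."""
    seen = {scenario}
    order = []
    queue = deque([scenario])
    while queue:
        current = queue.popleft()
        for nxt in feeds.get(current, []):
            if nxt not in seen:  # guards against cycles and shared dependents
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


print(downstream_of("validate-invoices", FEEDS))
```

A change to the validation scenario reaches every other scenario in this graph, while a change to the finance writer reaches none, which is the kind of difference worth knowing before editing.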

Designing safer AI outputs in Make

The safest pattern is not to let model output pass directly into a critical system. Instead, use Make to enforce structure.

Ask the model for a defined JSON shape, validate the response, then branch conditionally when required fields are missing or confidence is low.

This is where Make's scenario logic becomes useful: you can inspect the output, compare values, and send uncertain cases to review without losing context.
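The same validate-then-branch logic can be sketched in Python to show what the checks involve. The required fields, category list, and confidence threshold are hypothetical; in a Make scenario these checks would live in modules and filters rather than in code. Note that a model's self-reported confidence is not necessarily calibrated, so treat the threshold as a tuning parameter.

```python
import json

# Hypothetical output contract for an invoice-exception classifier.
REQUIRED = {"category": str, "amount": (int, float), "confidence": (int, float)}
ALLOWED_CATEGORIES = {"price_mismatch", "quantity_mismatch", "unknown_vendor"}


def check_model_output(raw: str, min_confidence: float = 0.8) -> tuple[str, object]:
    """Return ("continue", data) for usable output, else ("review", reason)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as err:
        return "review", f"not valid JSON: {err.msg}"
    if not isinstance(data, dict):
        return "review", "expected a JSON object"
    for key, expected in REQUIRED.items():
        if key not in data:
            return "review", f"missing field: {key}"
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(data[key], bool) or not isinstance(data[key], expected):
            return "review", f"wrong type for {key}"
    if data["category"] not in ALLOWED_CATEGORIES:
        return "review", f"unknown category: {data['category']}"
    if data["confidence"] < min_confidence:
        return "review", "low confidence"
    return "continue", data
```

Every failure path returns a reason, so the review branch receives context instead of a bare rejection.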

When to involve Maia by Make

For teams designing new AI-supported scenarios, Maia by Make can help build scenarios visually inside the Scenario Builder. That is useful when you need to prototype the orchestration logic itself, not just call a model from a single module.

The important point is transparency. The resulting scenario remains visible and editable on the canvas, so teams can inspect the logic, adjust mappings, and harden the design for production.

A practical decision framework

If you are evaluating RPA vs agentic AI for a real business process, start with the failure pattern rather than the vendor category.

Use RPA when the process is stable

RPA is still the right choice when:

The interface or system structure changes infrequently.

The input format is highly standardized.

A stopped process is safer than a misinterpreted one.

The business rules rarely require contextual judgment.

These are not outdated use cases. They are exactly where deterministic automation remains strongest.

Use agentic AI when the work depends on meaning

Agentic AI becomes valuable when:

Inputs arrive in multiple formats.

Meaning matters more than field position.

Exceptions are frequent and expensive to triage manually.

The process needs content-aware routing or summarization before action.

That does not remove the need for structure. It increases the need for validation around the model's output.

Use a hybrid design when both conditions exist

Most enterprise processes contain both stable and ambiguous stages. That is why hybrid architecture keeps winning in practice.

A common pattern looks like this:

Capture or receive data through a stable integration.

Pass only the ambiguous content to an AI module.

Validate the returned structure.

Route low-confidence cases for human review.

Write approved results back through deterministic logic.

This design keeps the AI focused on the problem it is best suited to solve.
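The five steps can be sketched end to end in Python. Everything here is hypothetical: `fake_interpret` stands in for an AI module, the `structured` flag stands in for whatever check decides that a record is unambiguous, and the `write_back` list stands in for the deterministic write to the target system.

```python
from typing import Callable


def fake_interpret(record: dict) -> dict:
    """Stand-in for an AI module; a real scenario would call a model here."""
    if "invoice" in record["text"]:
        return {"category": "billing_dispute", "confidence": 0.9}
    return {"category": "unclear", "confidence": 0.3}


def run_hybrid(records: list[dict],
               is_ambiguous: Callable[[dict], bool],
               interpret: Callable[[dict], dict],
               min_confidence: float = 0.8) -> dict[str, list[dict]]:
    """Structured path by default, AI only for ambiguous records, humans for doubt."""
    results: dict[str, list[dict]] = {"write_back": [], "human_review": []}
    for record in records:  # step 1: records arrive through a stable integration
        if not is_ambiguous(record):
            results["write_back"].append(record)  # no model call needed
            continue
        output = interpret(record)  # step 2: only ambiguous content reaches the model
        # step 3: validate the returned structure before trusting it
        valid = (isinstance(output.get("category"), str)
                 and isinstance(output.get("confidence"), (int, float)))
        if valid and output["confidence"] >= min_confidence:
            results["write_back"].append({**record, **output})  # step 5
        else:
            results["human_review"].append({**record, "ai_output": output})  # step 4
    return results


inbox = [
    {"id": 1, "text": "cancel ORD-12345", "structured": True},
    {"id": 2, "text": "the invoice looks wrong", "structured": False},
    {"id": 3, "text": "service down again?", "structured": False},
]
print(run_hybrid(inbox, lambda r: not r["structured"], fake_interpret))
```

Because the model is passed in as a function, it can be swapped or tested with a stub without touching the structured path.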

Conclusion

The debate over RPA vs agentic AI is a distraction from the real work of process design. Structured automation excels at predictable execution. Agentic AI earns its place when you need to handle variability, interpret context, and route exceptions.

The most resilient processes combine both, using a visual orchestration layer to clearly define where rules end and reasoning begins.

Start by mapping one existing process that frequently breaks or generates high exception volumes. Find the specific step where the deterministic logic fails. Map your first scenario in Make, insert an AI step at that exact point of failure, and see how the visual canvas handles the transition between structure and adaptability.


FAQ

1. How do you start building a hybrid RPA and agentic AI scenario in Make without overengineering it?

Start by isolating one failure point in an existing scenario, usually the step where fixed rules break on variable input. Keep the deterministic modules for system access and hand only the ambiguous content to an AI module, then validate the returned structure before the next operation.

2. What is the most common failure point when combining RPA and agentic logic in Make?

The handoff usually fails when model output does not match the schema expected by the next module. In Make, define a strict output structure, inspect bundles after the AI step, and branch low-confidence or malformed results to a review path instead of forcing them downstream.

3. Does agentic AI replace traditional RPA once the model is good enough?

No. That is the most common oversimplification in RPA vs agentic AI discussions. RPA is still the right tool for stable, high-volume work where you need predictable outputs every time. AI belongs in the steps where the outcome depends on what something means, not just what it looks like. Getting a better model does not change that division. It just means the AI handles its part more reliably.

4. Can Make support enterprise evaluation of agentic architectures, or is it better suited to lighter use cases?

Make supports serious evaluation because the visual canvas exposes scenario logic, mappings, and module outputs in a way teams can inspect quickly. When you need broader visibility, Make Grid shows how connected scenarios interact, which helps technical leaders assess governance and blast radius before scaling.

5. How do you scale an initial hybrid scenario after the first use case works?

Split the design into reusable scenarios by responsibility: ingestion, interpretation, validation, and system update. That lets you add conditional routing, connect more systems, and introduce Make AI Agents or different models only where the logic justifies the extra complexity.

6. Where is this architecture heading over the next 12 to 18 months?

The direction is not full replacement of deterministic automation. Teams are moving toward architectures where AI handles interpretation inside bounded scenarios, while structured modules, validation layers, and Make Grid provide control, auditability, and operational visibility.