Apr 14, 2026 | 8 minutes

LLM agents: From demo to full automation (2026)

An LLM agent that runs in a demo is built for ideal conditions. Here's what it takes to make one work in your own environment.

The screen-share has just ended. For twenty minutes you watched LLM agents read a support email, pull an account record, and stage a draft response.

The vendor asked if you had questions. You said no.

But you do. Not about whether it worked, as it clearly worked in the demo. The question is what happens when your actual intake process is involved.

Incomplete CRM data. Tickets arriving in three different formats. A team that needs to trust whatever this produces.

That gap between a controlled demo and a production scenario is where most implementations stall.

Using agents at scale requires setting structural guardrails and clear human oversight.

This article shows you how to build those boundaries and make the agent operational. The timing matters: Gartner forecasts that 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5% in 2025.

The organisations building that competency now will have a serious advantage over those that wait.

What are LLM agents in an operational context?

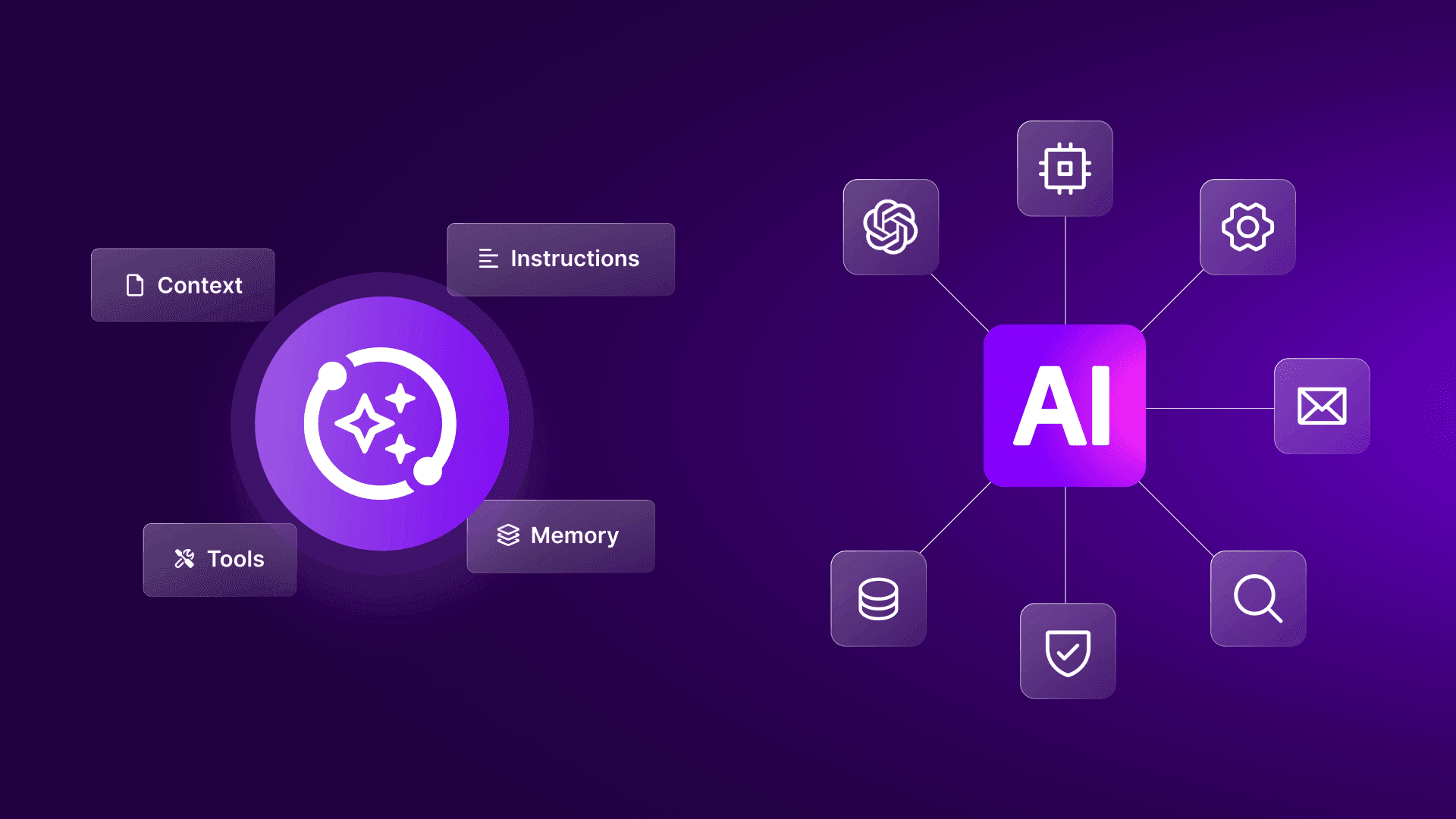

An LLM agent is not a chatbot, and it is not a basic rule. An LLM agent is a system that uses a large language model to interpret unstructured input, reason over it, and trigger actions in connected business tools, all within boundaries you define.

You'll want to distinguish it from a simple AI call, which is a module that sends text to a model and returns a summary, and from fully autonomous AI systems, which remain the wrong goal for most operational environments.

The defining characteristic of an LLM agent is its ability to take action in connected systems based on its output. In an operational context, an agent reads input from one or more sources, reasons over that unstructured data, produces structured output, and triggers a downstream action.

Crucially, it operates within a defined boundary that keeps human oversight intact. Consider the difference between traditional automation and agentic automation:

| Feature | Traditional rule-based automation | LLM agent automation |
| --- | --- | --- |
| Input handling | Requires structured data (forms, exact-match fields) | Processes unstructured data (free text, emails) |
| Logic execution | Follows deterministic conditional logic | Uses contextual reasoning to determine intent |
| Failure state | Halts or routes to a static error queue when rules fail | Adapts routing based on a confidence threshold |
| Best used for | Predictable, static scenarios like deal stage updates | Highly variable inputs like triage and classification |

When evaluating agentic process automation, the objective is not replacing existing rules.

It is identifying the exact points in your scenario where variability in the input exceeds what static rules can manage.

How do you implement LLM agents in business?

You implement LLM agents by embedding them as a reasoning step inside a broader automation scenario that handles context retrieval, structured output, and human review.

A demo usually isolates one reasoning task. Production scenarios don't.

They combine model selection, prompt design, authentication, field mapping, retries, logging, fallback paths, and exception handling.

The larger risk often sits not in the model output itself but in the surrounding scenario: incomplete context retrieval, bad bundle mapping, or a missing branch for low-confidence outcomes.

To understand the architecture of an AI agent scenario, look at a standard intake process: inbound support ticket triage.

Currently, a human reads the ticket, checks Salesforce or HubSpot for account tier, classifies the urgency, and routes it to the correct technical queue.

When you embed an LLM agent into this scenario, the architecture looks like this:

The trigger: A new ticket arrives via email or form submission.

Context retrieval: A module queries your CRM to pull the customer's tier, recent account history, and active contracts.

The agent step: The LLM agent receives the ticket text and the CRM data. It classifies the issue type, determines urgency, and drafts a preliminary response.

The decision boundary: The agent outputs a confidence score alongside its classification.

Downstream routing: If the confidence score is above 90%, the system routes the ticket directly to the specialized queue. If it falls below the threshold, the system triggers a Slack message to a human triage channel, attaching the draft and the agent's reasoning.

This separation keeps the reasoning and the execution distinct. The agent does not need to know how to authenticate into Salesforce or format a Slack API call.

Make handles the connective tissue, allowing the agent to focus purely on the reasoning task.
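To make the five steps above concrete, here is a minimal Python sketch of the same flow outside any particular platform. The function names, the canned CRM record, and the canned agent result are all hypothetical stand-ins, and confidence is assumed to be a number between 0 and 1. A score of exactly 0.90 is treated as not above the threshold, which is one reading of the routing rule.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.90  # the 90% cut-off from the routing step


@dataclass
class AgentResult:
    issue_type: str
    urgency: str
    confidence: float
    draft_reply: str


def fetch_crm_context(customer_email: str) -> dict:
    """Hypothetical stand-in for the CRM lookup module."""
    return {"tier": "enterprise", "active_contracts": 1}


def run_agent(ticket_text: str, crm_context: dict) -> AgentResult:
    """Hypothetical stand-in for the LLM call; returns a canned result."""
    return AgentResult("billing", "high", 0.72, "Thanks for reaching out...")


def route(result: AgentResult) -> str:
    """Decision boundary: auto-route above the threshold, otherwise review."""
    if result.confidence > CONFIDENCE_THRESHOLD:
        return f"queue:{result.issue_type}"
    return "human_review"


def handle_ticket(customer_email: str, ticket_text: str) -> str:
    context = fetch_crm_context(customer_email)  # context retrieval
    result = run_agent(ticket_text, context)     # agent step
    return route(result)                         # downstream routing
```

The point of the sketch is the shape: the agent step only reasons, and the routing decision lives in ordinary deterministic code that you can test without calling a model.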

Where LLM agents fit, and where they don't

Language model agents perform best when the input varies, but the required outcome stays bounded.

Support triage, inbound lead qualification, policy review, and compliance intake all fit that pattern.

They perform poorly when the task has no clear success criteria or when the downstream action carries material risk without review.

If the model can produce multiple plausible outputs and your team cannot define a reliable acceptance rule, the scenario needs more structure before you automate it.

Structuring outputs for downstream systems

Operational agents should return structured output, not prose alone.

If the next module needs issue_type, priority, confidence_score, and draft_reply, require those fields explicitly and validate them before routing.

This matters most when scenarios connect multiple systems. A support platform may accept free text, but your reporting layer, SLA logic, and escalation rules still depend on a consistent structure.

If one field arrives blank, you want the scenario to branch immediately rather than pass a partial payload downstream.
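A validation step for the four fields named above could look like the following sketch. The field names come from the text; the rule that confidence_score is a number between 0 and 1 is an assumption for illustration, not a documented requirement.

```python
REQUIRED_FIELDS = ("issue_type", "priority", "confidence_score", "draft_reply")


def validate_payload(payload: dict) -> list[str]:
    """Return a list of problems; an empty list means the payload can be routed."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = payload.get(field)
        if value is None or (isinstance(value, str) and not value.strip()):
            problems.append(f"missing or blank: {field}")

    score = payload.get("confidence_score")
    if isinstance(score, (int, float)) and not isinstance(score, bool):
        # Assumed convention: confidence is expressed as 0..1.
        if not 0.0 <= score <= 1.0:
            problems.append("confidence_score out of range 0..1")
    elif score is not None:
        problems.append("confidence_score is not a number")
    return problems
```

Any non-empty result should send the item down an explicit error or review branch rather than onward to the next module.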

Anticipating failure modes

Building a reliable agent in production takes longer than demos suggest.

In the first two to three weeks, expect these specific failure states, not as signs the architecture is wrong, but as the normal cost of calibrating against real data.

| Failure mode | What triggers it | Where it surfaces |
| --- | --- | --- |
| Agent confidently miscategorizes an input | Edge cases the prompt never saw: a ticket in a second language, a subject line matching two categories, an account type absent from your test set | Downstream, when a human notices the wrong team received it. The output looked correct. It routed cleanly. |
| Structured output drops a required field | Short or unusually formatted inputs cause the model to return a partial payload | The incomplete bundle reaches the next module, errors silently, or writes a blank value to a system of record |
| Fallback path fails silently | The branch condition was never tested against a real low-confidence output | The ticket disappears entirely. No Slack message fires. No one knows it existed. |

None of these means the approach is flawed. They mean your first two to three weeks in production are a calibration period, not a launch.

Monitor every output manually, test every branch explicitly, and treat the first real-data failures as the most valuable prompt data you'll collect.

Failure testing before launch

Before launch, run a controlled test set through the full scenario. Include clean inputs, ambiguous inputs, malformed payloads, duplicate submissions, and samples with missing context.

You’re not only testing the model. You are testing whether each module branches correctly when the model behaves unexpectedly.

Teams often skip negative testing because the happy path already works. That creates brittle deployments. In practice, the value of the scenario depends on how well it handles the worst 10% of cases, not the best 90%.
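A negative test set like the one described above can be expressed as a small table of inputs and the branch each one should take. In the sketch below, `choose_branch` is a toy router written only to give the harness something to exercise; its logic, the branch names, and the 0.90 threshold are assumptions, not a description of any specific platform.

```python
def choose_branch(payload, seen_ids):
    """Toy router (hypothetical logic) used to illustrate the test harness."""
    if not isinstance(payload, dict) or "ticket_id" not in payload:
        return "error_queue"
    if payload["ticket_id"] in seen_ids:
        return "duplicate"
    seen_ids.add(payload["ticket_id"])
    score = payload.get("confidence_score")
    if not isinstance(score, (int, float)) or score <= 0.90:
        return "human_review"
    return "auto_route"


TEST_CASES = [
    ("clean input", {"ticket_id": 1, "confidence_score": 0.97}, "auto_route"),
    ("ambiguous input", {"ticket_id": 2, "confidence_score": 0.55}, "human_review"),
    ("missing context", {"ticket_id": 3}, "human_review"),
    ("malformed payload", "not a dict", "error_queue"),
    ("duplicate submission", {"ticket_id": 1, "confidence_score": 0.97}, "duplicate"),
]


def run_test_set(cases):
    """Run every case in order and return (name, expected, actual) for each mismatch."""
    seen_ids = set()
    failures = []
    for name, payload, expected in cases:
        actual = choose_branch(payload, seen_ids)
        if actual != expected:
            failures.append((name, expected, actual))
    return failures
```

An empty list from `run_test_set(TEST_CASES)` means every branch fired as intended; anything else names the exact case and the branch it took instead.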

LLM agents also carry structural constraints that don't disappear with better prompts.

Context windows limit how much source data the agent can process in a single pass

Prompt sensitivity means small wording changes can shift outputs, so version control on prompts is not optional

Latency increases with model size, which matters when SLAs depend on sub-minute response times

Cost scales with token volume, so high-throughput scenarios need model selection that balances accuracy against per-call expense

These are engineering constraints you account for in scenario design, not reasons to avoid deployment.
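The cost constraint in particular is simple arithmetic that is worth running before you pick a model. The sketch below assumes a single blended price per million tokens, which is a simplification since providers often price input and output tokens separately, and every number in the usage note is made up for illustration.

```python
def monthly_cost(tickets_per_day, tokens_per_ticket, price_per_million_tokens, days=30):
    """Estimate monthly spend from volume, tokens per call, and a blended token price."""
    total_tokens = tickets_per_day * tokens_per_ticket * days
    return total_tokens / 1_000_000 * price_per_million_tokens
```

With hypothetical figures of 2,000 tickets a day at 1,500 tokens each, a month is 90 million tokens. At an assumed 0.50 per million that comes to 45, while at an assumed 10 per million it comes to 900, so the same scenario can differ twentyfold in cost depending on the model behind one step.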

How do you set the human-in-the-loop boundary for LLM agents?

You set the boundary with a confidence threshold. Above it, the agent acts on its own; below it, the agent stops acting autonomously and routes to a human reviewer.

The decision boundary between the agent and your team is the most important design choice in your system. This is not an ethical afterthought; it is a structural requirement for reliable operations.

Implementing human-in-the-loop automation requires defining exactly what happens when the agent encounters ambiguity. You can't assume the agent will accurately flag its own hallucinations.

Instead, you design the scenario to catch low-confidence outputs before they reach a customer or update a master system of record.

Before you build the review queue, three design questions need answers. Where does the confidence threshold sit, meaning the exact score below which the agent stops acting autonomously?

What does the human review queue look like, and how much context does the reviewer see without having to go looking for it?

And how do you track accuracy over time so you can expand or contract the agent's autonomous scope based on evidence rather than instinct?

These aren't configuration details. They're the structural decisions that determine whether the deployment holds up at week six or quietly degrades.

Earning trust in AI agents happens through verifiable accuracy, not vendor promises. Start with a high threshold for human review.

As the agent proves reliable against your specific data schema, you gradually lower the threshold, expanding its autonomous scope.
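One way to base that threshold change on evidence is to score human-reviewed samples and look for the lowest threshold at which autonomous outputs would still have met an accuracy target. The sketch below is one possible approach, not a prescribed method; the 98% target and the 50-sample minimum are placeholder values you would set yourself.

```python
def lowest_safe_threshold(samples, candidates, target_accuracy=0.98, min_samples=50):
    """Pick the lowest candidate threshold whose above-threshold outputs hit the target.

    samples: list of (confidence, was_correct) pairs from human-reviewed outputs.
    Returns None when no candidate has enough evidence or enough accuracy.
    """
    for threshold in sorted(candidates):
        above = [was_correct for confidence, was_correct in samples if confidence > threshold]
        if len(above) < min_samples:
            continue  # too little evidence to justify autonomy at this level
        accuracy = sum(above) / len(above)
        if accuracy >= target_accuracy:
            return threshold
    return None
```

A result of None is a useful answer too: it says the reviewed data does not yet justify lowering the threshold at all.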

Choosing the right review triggers

Confidence score alone should not control the review. Some categories deserve mandatory review regardless of score: legal requests, account cancellations, security incidents, and any case that could trigger customer-facing commitments.

A stronger pattern uses layered review triggers. Route to a human when confidence is low, when the issue falls into a sensitive class, or when the output conflicts with source data.

That design catches more harmful errors than a threshold-only model.
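The layered pattern can be sketched as a function that returns every reason an item needs review, so the reviewer sees why it was flagged. The category labels, the `account_tier` conflict check, and the 0.90 default are illustrative assumptions; a real conflict check would compare whichever fields your agent echoes back from the source data.

```python
SENSITIVE_CATEGORIES = {"legal_request", "account_cancellation", "security_incident"}


def review_reasons(output: dict, crm_record: dict, threshold: float = 0.90) -> list[str]:
    """Return all triggered review reasons; an empty list means no review is required."""
    reasons = []
    if output["confidence_score"] <= threshold:
        reasons.append("low_confidence")
    if output["issue_type"] in SENSITIVE_CATEGORIES:
        reasons.append("sensitive_category")
    # Example conflict check: the tier the agent reports disagrees with the CRM.
    if output.get("account_tier") not in (None, crm_record.get("tier")):
        reasons.append("conflicts_with_source")
    return reasons
```

Note that a security incident scored at 0.99 still returns `["sensitive_category"]`, which is exactly the case a threshold-only design would let through.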

Building a review queue that people will use

Human review fails when the reviewer has to reconstruct context manually. Show the original input, retrieved account data, the agent's structured output, and the reasoning summary in one place.

If reviewers must jump between tools, your fallback path becomes a productivity drain.

Reviewers also need a fast correction mechanism. Let them approve, edit, or reroute without rebuilding the context themselves.

Feed those corrections back as the most valuable dataset for improving prompts, routing logic, and escalation criteria.
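As a rough sketch of what a review item and its correction record might contain, the following bundles the four pieces of context listed above and logs the reviewer's decision. The class, field, and action names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ReviewItem:
    """Everything a reviewer needs in one place (field names are illustrative)."""
    original_input: str
    account_data: dict
    agent_output: dict
    reasoning_summary: str


def record_decision(item: ReviewItem, action: str, changes=None) -> dict:
    """Apply an approve/edit/reroute decision and return a record for later analysis."""
    if action not in {"approve", "edit", "reroute"}:
        raise ValueError(f"unknown action: {action!r}")
    if action == "approve":
        final_output = dict(item.agent_output)
    else:
        # Reviewer changes override the agent's fields; untouched fields are kept.
        final_output = {**item.agent_output, **(changes or {})}
    return {
        "input": item.original_input,
        "agent_output": item.agent_output,
        "final_output": final_output,
        "action": action,
        "was_corrected": final_output != item.agent_output,
    }
```

Storing the agent's output next to the reviewer's final version is what turns the queue into a dataset: each record with `was_corrected` set to true is a concrete example of where the prompt or routing logic fell short.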

What are the operational benefits of LLM agents?

The main benefits are faster classification, higher queue quality, and structured output that feeds directly into reporting and SLA logic.

When you deploy an agent for AI-assisted triage, the advantage is not a generic increase in efficiency.

The tangible benefit is that the operations manager who previously spent 90 minutes each morning manually reading 80 tickets is now reviewing 15 edge cases and one accuracy report.

First-response times drop because automated ticket classification no longer depends on a human being physically present at their desk.

The agent processes the input, applies the logic, and stages the work instantly. To realize these benefits, you must fulfill three prerequisites:

Clean input data from your source systems.

A well-scoped, tested prompt that explicitly defines the required output structure.

A tested fallback scenario for low-confidence routing.

Without these, adding an agent merely accelerates the creation of an unstructured mess.

Better queue quality, not just faster routing

The strongest operational gain is often queue quality.

In the ticket triage scenario, instead of flooding the team with 80 mixed-priority requests, the scenario separates billing issues, product defects, access requests, and churn signals before a person touches the queue.

That changes staffing decisions. Team leads can assign specialized reviewers to the categories that need domain judgment, while lower-risk classes move through a controlled automated path.

The result is better response quality and more predictable handling time.

More reliable reporting and auditability

Structured outputs also improve reporting. In the triage scenario, when issue type, risk level, sentiment, and escalation reason arrive as validated fields, you can track error rates and handoff quality over time.

That audit trail matters for technical leaders evaluating the deployment. They need evidence on where the agent performed well, where it failed, and whether the review boundary actually reduced risk.

Without it, the deployment remains a pilot, not an operational capability.
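Once outputs arrive as validated fields, tracking error rates is a short aggregation. The sketch below assumes each reviewed record carries an `issue_type` and a `was_corrected` flag set by a human reviewer; both names are illustrative.

```python
from collections import defaultdict


def error_rate_by_issue_type(records):
    """Return the share of reviewed outputs that a human corrected, per issue type."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for record in records:
        totals[record["issue_type"]] += 1
        if record["was_corrected"]:
            errors[record["issue_type"]] += 1
    return {issue_type: errors[issue_type] / totals[issue_type] for issue_type in totals}
```

Run weekly, a breakdown like this shows which categories are ready for more autonomy and which ones should stay behind mandatory review.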

Where are LLM agents used in real-world operations?

LLM agents are used in revenue operations, customer success, internal service desks, and compliance workflows where unstructured input creates manual bottlenecks.

Common implementation patterns include:

Revenue operations: Parse unstructured inbound lead data, extract company details, and pre-populate CRM fields before a rep touches the record.

Customer success: Separate generic how-to questions from active churn risks, drafting responses for the former while escalating the latter.

Internal service desks: Classify employee requests arriving via shared inboxes or Slack, routing password resets, procurement approvals, and access requests to the correct resolver group.

Compliance: Extract clauses from dense vendor documents, compare them against approved language, and flag deviations for specialist review.

As organizations mature, they move toward multi-model orchestration, where different agents handle different subtasks within a single scenario.

The tooling to manage this at scale is still developing, but the pattern is proven in each of these contexts individually.

How does Make help you build and manage LLM agents?

Make gives you the scenario architecture, visual debugging, and multi-model routing you need to move LLM agents from isolated experiments into production.

When you manage an agent in a production process, you need absolute clarity on what data entered the agent, what logic it applied, and what it pushed downstream.

The visual builder gives you total visibility. When an agent misbehaves in a linear tool, diagnosing the failure often means reading logs or adding debug outputs to code.

In Make's visual canvas, you see the input that entered the module, the output it produced, and the subsequent operation, all in one place. For a full breakdown of how the new agent architecture works inside the canvas, read how Make rebuilt AI Agents in the scenario builder.

This separates debugging from guessing.

Make AI Agents inside scenarios, not alongside them

Make AI Agents operate inside your scenarios. The agent acts as one module within a broader architecture that handles data retrieval, field mapping, conditional logic, error handling, and downstream actions.

This architecture matters because the agent doesn’t need to orchestrate the entire process. Make handles the API connections, the retries, and the formatting.

The agent handles the reasoning step it is uniquely suited for.

Multi-model orchestration

Different tasks have different latency, cost, and capability profiles. A classification step might run perfectly on a fast, inexpensive model, while a complex drafting step requires a heavier, more capable model.

Make enables multi-model orchestration within a single scenario. You can route specific steps to different models without rebuilding the architecture or committing to a single provider for the entire process.

This allows you to measure your own AI performance benchmarks and optimize for both cost and reliability at the module level.
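Expressed in plain Python rather than on Make's canvas, per-step model routing amounts to a lookup from step to model. The model names and latency budgets below are placeholders, not real model identifiers or recommended values.

```python
# Hypothetical step-to-model mapping; names and budgets are placeholders.
MODEL_BY_STEP = {
    "classify": {"model": "small-fast-model", "max_latency_s": 2},
    "draft_reply": {"model": "large-capable-model", "max_latency_s": 20},
}


def model_for(step: str) -> str:
    """Look up which model a step should call; fail loudly for unconfigured steps."""
    try:
        return MODEL_BY_STEP[step]["model"]
    except KeyError:
        raise ValueError(f"no model configured for step {step!r}") from None
```

Keeping the mapping in one place means swapping the model behind a single step is a one-line change, which is what makes per-step benchmarking practical.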

Visual debugging and operational transparency

Operational transparency is where Make becomes especially useful for technical teams. When an output looks wrong, you can inspect each module, each bundle, and each branch condition without reconstructing the chain from scattered logs.

When you adjust prompt instructions, swap models, or alter routing criteria, you can compare results against prior operations and see exactly what changed.

Teams evaluating agent platforms should treat this visibility as a core requirement, not a convenience.

Planning for scale across teams

A pilot often starts with one queue and one model. Production expansion rarely stays that contained.

As logic splits across regions, business units, and escalation classes, you can observe the full system in Make Grid and manage growing scenario portfolios without losing visibility into individual agent steps.

Teams evaluating agent platforms often find that agents deliver the most value when they sit inside a wider operational design rather than as isolated AI features.

Conclusion

The shift from rule-based automation to agent-assisted processes is not a wholesale replacement of your current stack.

Map the exact systems involved, the data required to make a decision, and the human queue that catches exceptions. Build the connective tissue first, test the prompt against real data, and let the agent earn its autonomy through verified accuracy.

If it takes you more than 30 minutes to identify a step where input variability exceeds what your rules can manage, your current process is more rule-ready than it is agent-ready, and that's useful information too.

Ready to build your first agent scenario? Browse the Make Library of Agents for ready-made triage, routing, and classification examples built for real workflows.

Start for free on Make and connect your first reasoning step to the tools your team already uses.

FAQs

1. What is the best way to start implementing LLM agents in Make without overbuilding the first scenario?

Start with one bounded classification or routing decision, such as support triage or inbound lead qualification. Pull only the context the model needs, require structured output, and add a human review branch before any customer-facing or system-of-record update.

2. What usually breaks first when teams move LLM agents from a demo into production?

The first failure point is often not the model output itself, but the scenario around it: missing fields, bad mapping, or a fallback branch that never fires. In Make, inspect the bundles at each module and test low-confidence, malformed, and duplicate inputs before launch so the exception path works under real conditions.

3. Do LLM agents replace rules-based automation once they are accurate enough?

No. They handle variability in unstructured input, but deterministic logic still matters for approvals, routing rules, validation, and downstream updates. The strongest design uses the agent for judgment where language is messy, then lets the rest of the scenario enforce structure.

4. How do technical teams evaluate whether Make can support production-grade LLM agents?

The question to ask is whether the platform shows you what entered each module, what came out, and what branched downstream, without requiring custom logging. In Make, that visibility is built into the canvas. You can inspect reasoning steps and trace failures without building observability infrastructure around the agent.

5. How can you scale an initial LLM agent build to handle more volume and more systems?

Start by separating intake, reasoning, review, and downstream actions into distinct modules. As volume grows, add conditional routing by issue type or region, assign different models to different steps, and monitor the full architecture in Make Grid.

6. Where are LLM agents heading over the next 12 to 18 months for enterprise automation teams?

The direction is toward narrower, better-governed agent roles inside larger operational systems, not open-ended autonomy. Teams will combine Make AI Agents, structured validation, and model-specific routing so each reasoning step stays observable, measurable, and tied to a defined business boundary.